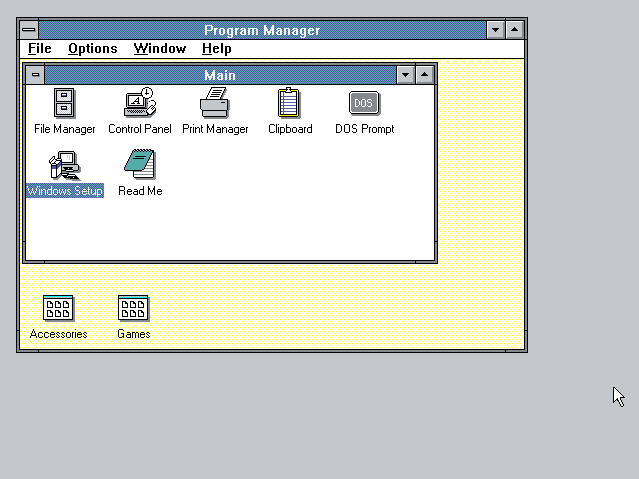

In the old days of technology, screen resolution was not much of an issue. Windows came with a few preset options, and to get higher resolution, more colors, or both, you would install a driver for your graphics card. As time passed, you could also choose better video cards and monitors. Today, we have many options regarding displays, their quality, and the supported resolutions. In this article, I’ll take you through a bit of history and explain all the essential concepts, including common acronyms for screen resolution sizes, like 1080p, 2K, QHD, or 4K. You’ll also learn about other important elements like the aspect ratio and the screen’s orientation. Let’s get started:

It all started with IBM and CGA

IBM set the early color graphics standards for PCs. First came CGA (Color Graphics Adapter), followed by EGA (Enhanced Graphics Adapter) and VGA (Video Graphics Array). Regardless of the capability of your monitor, you would still have to choose from one of the few options available through your graphics card’s drivers. For nostalgia’s sake, here’s how things looked on a once well-known CGA display.

What an image rendered on a CGA display looked like

Image source: Wikipedia

With the advent of high-definition video and the increased popularity of the 16:9 aspect ratio (I’ll explain aspect ratios in a bit), selecting a screen resolution is no longer the simple affair it once was. However, this also means that there are many more options to choose from, with something to suit almost everyone’s preferences. Let’s look at today’s terminology and what it means:

The screen is what by what?

Strictly speaking, the term “resolution” is incorrect when it refers to the number of pixels on a screen, because a pixel count says nothing about how densely the pixels are packed. Density is covered by another metric called PPI (Pixels Per Inch).

The “resolution” is technically the number of pixels per area unit rather than the total number of pixels. In this article, I’m using the term as commonly understood rather than in its strictly technical sense. Since the beginning, the resolution has been described (accurately or not) by the number of pixels arranged horizontally and vertically on a display. For example, 640 x 480 = 307200 pixels. The graphics card’s capability determined the choices available, which differed from manufacturer to manufacturer.
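To make the difference between pixel count and pixel density concrete, here is a minimal Python sketch. The function names and the 24-inch and 6-inch diagonal sizes are my own illustrative assumptions; the PPI calculation divides the number of pixels along the diagonal by the diagonal length in inches.

```python
import math

def total_pixels(width_px: int, height_px: int) -> int:
    """Total number of pixels on a display with the given dimensions."""
    return width_px * height_px

def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: pixels along the diagonal divided by the diagonal in inches."""
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_in

print(total_pixels(640, 480))  # 307200

# The same 1920 x 1080 pixel count on two hypothetical screen sizes:
print(round(pixels_per_inch(1920, 1080, 24.0)))  # 92 (desktop monitor)
print(round(pixels_per_inch(1920, 1080, 6.0)))   # 367 (smartphone)
```

The two screens have exactly the same number of pixels, yet the smaller one packs them roughly four times as densely, which is why the pixel count alone doesn’t tell you how sharp a display looks.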

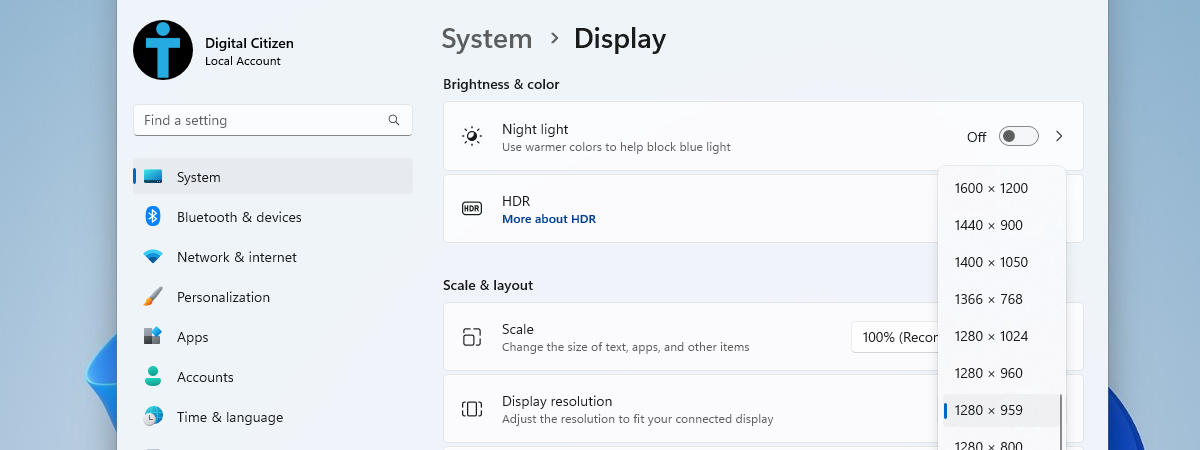

Using an old computer with a low-resolution CRT monitor

The resolutions built into Windows were limited, so if you didn’t have the driver for your graphics card, you would be stuck with the lower-resolution screen that Windows provided. If you’ve watched the old Windows Setup or installed a newer video driver version, you may have seen the 640 x 480 low-resolution screen for a moment or two. It was ugly, but that was the Windows default.

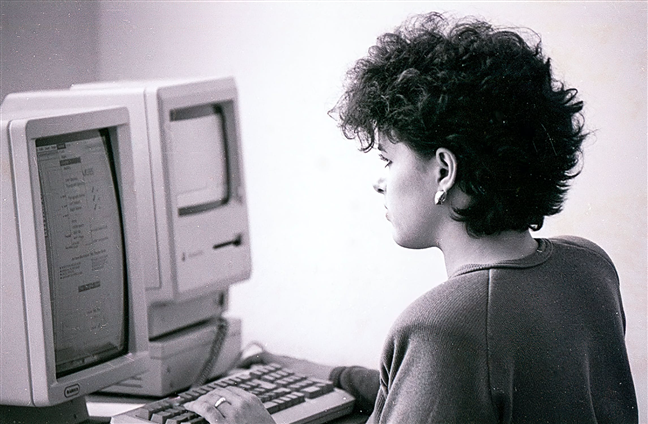

What Windows 3 looked like in the old days

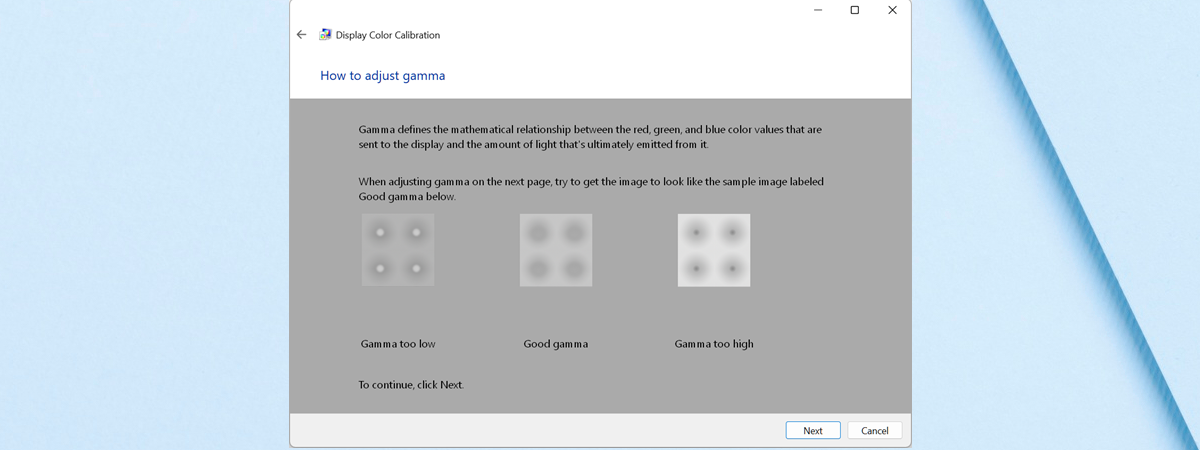

As monitor quality improved, Windows began offering a few more built-in options, but the burden was still mostly on the graphics card manufacturers, especially if you wanted a really high-resolution display. The more recent versions of Windows can detect the default screen resolution for your monitor and graphics card and adjust it accordingly. This doesn’t mean that what Windows chooses is always the best option, but it works, and you can change it after seeing what it looks like, if that’s what you want. For guidance, here’s how to change the screen resolution in Windows 10 and how to modify it in Windows 11.

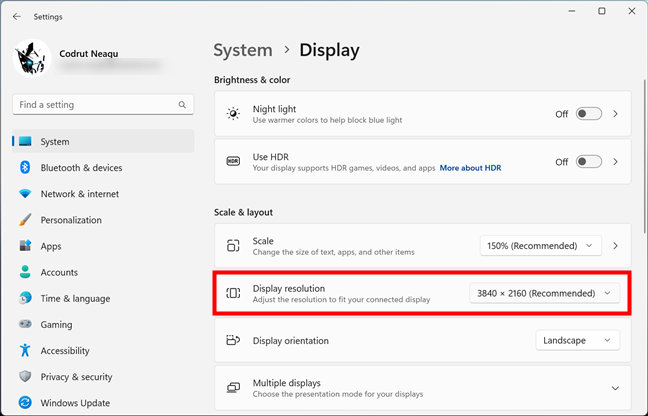

How to change the display resolution in Windows 11

Furthermore, if you are curious to find out the resolution of your screen, you should take a look at the methods shown here: How to check the screen resolution in Windows.

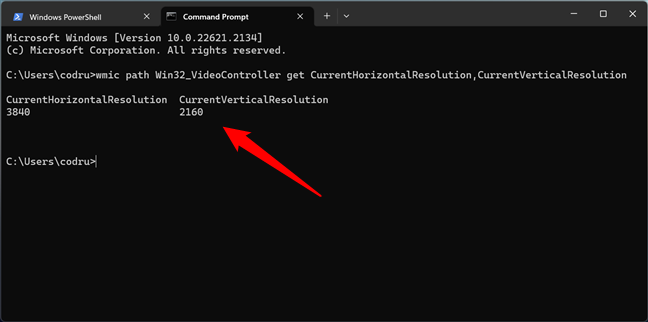

How to use CMD to get the screen resolution

TIP: Do you have a smartphone or tablet made by Apple? You can check your iPad or iPhone resolution too.

Mind your P’s and I’s when it comes to screen resolutions: 720p, 1080i, 1080p, and so on

You may have seen the screen resolution described as something like 720p, 1080i, or 1080p. What does that mean? To begin with, the letters tell you how the picture is “painted” on the monitor. A “p” stands for progressive, and an “i” stands for interlaced. The interlaced scan is a holdover from television and early CRT monitors. The monitor or TV screen has lines of pixels arranged horizontally across it. The lines were relatively easy to see if you got close to an older monitor or TV, but nowadays, the pixels on the screen are so small that they are hard to see with the naked eye.

The monitor’s electronics “paint” each screen line by line at a speed faster than the eye can see. An interlaced display paints all the odd lines first, then all the even lines. Since the screen is being painted in alternate lines, flicker has always been a problem with interlaced screens.

Manufacturers have tried to overcome this problem in various ways. The most common way is to increase the number of times an entire screen is painted in a second. This is called the refresh rate. The most common refresh rate was 60 times per second, which is acceptable for most people, but it could be pushed a bit higher to eliminate the flicker that some people still perceived.

How an image is rendered on a progressive display vs. an interlaced display

As people moved away from the older CRT displays, the system changed from interlaced to progressive scan because the new digital displays are much faster. In a progressive scan, the lines are painted on the screen in sequence rather than first the odd lines and then the even lines. If you want to translate, 1080p, for example, is used for displays characterized by 1080 horizontal lines of vertical resolution and a progressive scan. There’s a rather eye-boggling illustration of the differences between progressive and interlaced scans in this Wikipedia article: Progressive scan. For another fascinating history lesson, you can also read about the Interlaced video.
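To make the difference in painting order concrete, here is a minimal Python sketch. The function names are my own, and the eight-line screen is only an illustrative assumption; real displays have hundreds or thousands of lines.

```python
def progressive_order(line_count: int) -> list:
    """Lines are painted in sequence: 1, 2, 3, ..."""
    return list(range(1, line_count + 1))

def interlaced_order(line_count: int) -> list:
    """All the odd lines are painted first, then all the even lines."""
    odd_lines = list(range(1, line_count + 1, 2))
    even_lines = list(range(2, line_count + 1, 2))
    return odd_lines + even_lines

print(progressive_order(8))  # [1, 2, 3, 4, 5, 6, 7, 8]
print(interlaced_order(8))   # [1, 3, 5, 7, 2, 4, 6, 8]
```

In the interlaced case, neighboring lines are painted half a screen apart in time, which is where the flicker described earlier comes from.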

Furthermore, while on CRT displays the frame rate of the content didn’t matter that much in terms of perceived image quality, on LED screens it is more important. The relation between frame rate and refresh rate is relevant for gaming performance, video content rendering, and daily user experience alike. While the refresh rate (Hz) is the number of times the display panel updates the image every second, the frame rate (FPS) is the number of images the graphics card renders every second.

A higher frame rate paired with a higher refresh rate can improve visual quality and make everything look more responsive. However, the frame rate depends on the performance offered by your computer, smartphone, TV, or similar device, while the refresh rate depends on the monitor’s capability. Ideally, the frame rate shouldn’t exceed the refresh rate; otherwise, screen tearing may occur.
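If you like numbers, the relation between the two rates can be sketched in a few lines of Python. The function names are hypothetical, and the example rates are just common values; this is a back-of-the-envelope calculation, not a model of how any particular display or graphics card behaves.

```python
def interval_ms(rate_per_second: float) -> float:
    """Time between two consecutive refreshes (or frames), in milliseconds."""
    return 1000.0 / rate_per_second

def frames_per_refresh(frame_rate_fps: float, refresh_rate_hz: float) -> float:
    """How many rendered frames arrive, on average, during one screen refresh."""
    return frame_rate_fps / refresh_rate_hz

for hz in (60, 120, 144, 240, 360):
    print(f"{hz} Hz -> a new refresh every {interval_ms(hz):.2f} ms")

print(frames_per_refresh(120, 60))  # 2.0  -> more than one frame per refresh
print(frames_per_refresh(45, 60))   # 0.75 -> fewer frames than refreshes
```

When the result is above 1, the graphics card delivers more than one frame during a single refresh, which is the situation in which the display may end up showing parts of two different frames at once.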

Do you notice the screen tearing effect?

Image source: Wikipedia

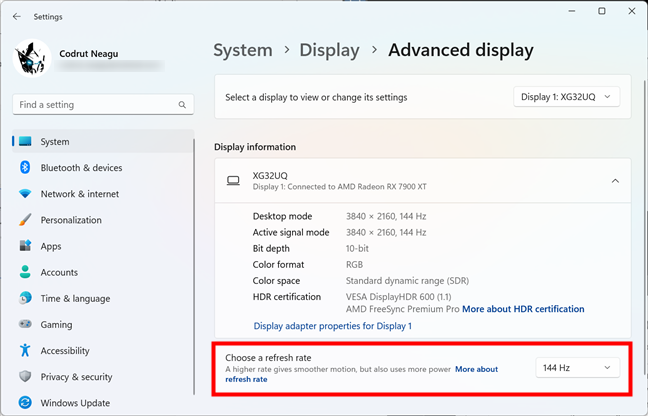

Thanks to the advancements made in graphics cards and processors, the display panels we find nowadays on computer monitors, laptops, smartphones, tablets, and even TVs are offering increased refresh rates. Because the performance of our devices allows them to deliver more frames per second, screens have to keep up with them. And that’s why today, we have displays with refresh rates that go from standard 60 Hz up to much higher ones, like 120 Hz, 144 Hz, 240 Hz, and even 360 Hz.

A gaming monitor with a refresh rate of 144 Hz

TIP: If you want to see or set the refresh rate on your PC, check these guides, depending on your Windows version: Where to find the Windows 10 refresh rate and how to change it, or How to change the refresh rate in Windows 11.

What about the numbers? Understanding what screen resolution sizes mean (720p, 1080p, 1440p, 2K, 4K, 8K)

Manufacturers developed a shorthand to explain display resolution sizes when high-definition TVs became the norm. The most common numbers you see today are 720p resolution (HD), 1080p resolution (1920 x 1080), 1440p resolution (sometimes marketed, inaccurately, as 2K resolution), 4K, and 8K. As we have seen, the “p” and the “i” tell you whether it’s a progressive or interlaced scan display. Moreover, this shorthand is sometimes used to describe computer monitors as well, even though, in general, a monitor is capable of a higher definition display than a TV. In names like 720p or 1080p, the number refers to the number of horizontal lines on the display, that is, its height in pixels. Names like 4K and 8K refer instead to the approximate width in pixels.

Here’s how the shorthand translates:

- 720p resolution is known as HD or HD Ready resolution and usually means that the display has 1280 x 720 pixels.

- 1080p resolution is also known as FHD or Full HD resolution and typically refers to displays with 1920 x 1080 pixels.

- 1440p resolution is commonly referred to as QHD or Quad HD resolution, and it is typically seen on gaming monitors and high-end smartphones. 1440p (usually 2560 x 1440 pixels) has four times as many pixels as 720p HD or HD Ready. To make things even more confusing, many premium smartphones feature a so-called 2960 x 1440 Quad HD+ resolution, which still counts as 1440p because its shorter side has 1440 pixels.

- 4K or 2160p resolution (regularly 3840 x 2160 pixels) is also known as UHD or Ultra HD resolution. It is a huge display resolution, found on premium Smart TVs and computer monitors. 2160p is called 4K because the width is close to 4000 pixels. It also offers four times the number of pixels in a 1080p FHD or Full HD screen.

- 8K or 4320p (generally 7680 x 4320 pixels) offers 16 times as many pixels as the standard 1080p FHD or Full HD resolution. For now, you get 8K only on expensive TVs like the ones made by Samsung or LG. However, you can test whether your computer can render such a large amount of data by playing an 8K video sample.
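The pixel-count comparisons in the list above are easy to verify yourself. Here is a minimal Python sketch that does so; the dictionary and function names are my own, and it uses the typical pixel dimensions quoted for each shorthand.

```python
# Typical pixel dimensions for each shorthand, as listed above.
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}

def pixel_count(name: str) -> int:
    """Total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height

def pixel_ratio(larger: str, smaller: str) -> float:
    """How many times more pixels the first resolution has than the second."""
    return pixel_count(larger) / pixel_count(smaller)

print(pixel_ratio("1440p", "720p"))  # 4.0
print(pixel_ratio("4K", "1080p"))    # 4.0
print(pixel_ratio("8K", "1080p"))    # 16.0
```

Doubling both the width and the height quadruples the pixel count, which is why each of these steps is a factor of four rather than two.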

The problem with 2K is that it doesn’t exist for consumer devices

In cinematography, the 2K resolution exists, and it refers to 2048 x 1080. However, in the consumer market, it would be considered 1080p. To make things worse, some display manufacturers use the term 2K for resolutions like 2560 x 1440 because their displays have a horizontal resolution of 2000 pixels or more. Unfortunately, that is incorrect, as this resolution is 1440p or Quad HD, but not 2K.

A cinema screen with a 2K resolution

Therefore, when you hear about a TV, computer monitor, smartphone, or tablet having a 2K resolution, you should research the display further. Its real resolution is likely to be 1440p or Quad HD.

Can you see high-resolution videos on lower-resolution screens?

You might wonder whether you can watch a high-resolution video on a lower-resolution screen. For example, is it possible to use a 720p TV to watch a 1080p video? The answer is yes! Regardless of your screen resolution, you can use it to watch any video with any resolution (higher or lower). However, if the video you want to watch has a higher resolution than your display, your device converts the video’s resolution to one that fits the resolution of your display. This is called downsampling.

For example, if you want to watch a video with a 4K resolution on a 720p screen, that video is shown at a 720p resolution because that is all your screen can offer.
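To get a feel for how much detail is folded together in that process, here is a rough Python sketch. The function name is hypothetical, and the calculation assumes the video and the screen share the same aspect ratio; actual devices use their own scaling algorithms, which this does not try to reproduce.

```python
def downsample_factors(video: tuple, screen: tuple) -> tuple:
    """Return (linear factor, source pixels per screen pixel) when a video
    is shrunk to fit a screen with the same aspect ratio."""
    video_w, video_h = video
    screen_w, screen_h = screen
    linear = max(video_w / screen_w, video_h / screen_h)
    return linear, linear ** 2

# A 4K video on a 720p screen: each screen pixel stands in for 9 video pixels.
print(downsample_factors((3840, 2160), (1280, 720)))  # (3.0, 9.0)

# A 1080p video on a 720p screen.
print(downsample_factors((1920, 1080), (1280, 720)))  # (1.5, 2.25)
```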

What is the Aspect Ratio?

The aspect ratio term was initially used in motion pictures, indicating how wide the picture was in relation to its height. Movies were initially recorded in a 4:3 aspect ratio; this carried over into television and early computer displays. The motion picture aspect ratio changed much more quickly to a wider screen, which meant that when movies were shown on TV, they had to be cropped, or the image had to be manipulated in other ways to fit the TV screen.

The same picture in 16:9 vs 4:3 aspect ratio

As display technology improved, TV and monitor manufacturers also began to move toward widescreen displays. Originally “widescreen” referred to anything wider than the typical 4:3 display, but it quickly came to mean a 16:10 ratio and later 16:9. Nowadays, nearly all computer monitors and TVs are only available in widescreen, and TV broadcasts and web pages have adapted to match.

Until 2010, 16:10 was the most popular aspect ratio for widescreen computer displays. However, with the rise in popularity of high-definition televisions, which used resolutions such as 720p and 1080p and made these terms synonymous with high definition, 16:9 has become the high-definition standard aspect ratio.

Depending on the aspect ratio of your display, you can use only resolutions specific to its width and height. Some of the most common resolutions that can be used for each aspect ratio are the following:

- 4:3 aspect ratio resolutions: 640x480, 800x600, 960x720, 1024x768, 1280x960, 1400x1050, 1440x1080, 1600x1200, 1856x1392, 1920x1440, and 2048x1536.

- 16:10 aspect ratio resolutions: 1280x800, 1440x900, 1680x1050, 1920x1200, and 2560x1600.

- 16:9 aspect ratio resolutions: 1024x576, 1152x648, 1280x720 (HD), 1366x768 (only approximately 16:9), 1600x900, 1920x1080 (FHD), 2560x1440 (QHD), 3840x2160 (4K), and 7680x4320 (8K).
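If you want to check which aspect ratio a given resolution belongs to, you can reduce its width and height by their greatest common divisor. Here is a minimal Python sketch of that idea; the function names and the 1% tolerance are my own assumptions.

```python
from math import gcd

def aspect_ratio(width_px: int, height_px: int) -> tuple:
    """Reduce width:height to its simplest whole-number form."""
    divisor = gcd(width_px, height_px)
    return width_px // divisor, height_px // divisor

def is_close_to(width_px: int, height_px: int,
                ratio_w: int, ratio_h: int, tolerance: float = 0.01) -> bool:
    """True if width/height is within the given relative tolerance of ratio_w/ratio_h."""
    actual = width_px / height_px
    target = ratio_w / ratio_h
    return abs(actual - target) / target <= tolerance

print(aspect_ratio(1920, 1080))  # (16, 9)
print(aspect_ratio(1024, 768))   # (4, 3)
print(aspect_ratio(1680, 1050))  # (8, 5), which is the same as 16:10
print(aspect_ratio(1366, 768))   # (683, 384), not exactly 16:9
print(is_close_to(1366, 768, 16, 9))  # True
```

Note that 16:10 reduces to 8:5, and that 1366x768 is only very close to 16:9 rather than an exact match, which is why a tolerance check is handy alongside the exact reduction.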

In recent years, many smartphone manufacturers also adopted taller aspect ratios. Nowadays, you can find mobile phones with exotic aspect ratios such as 18:9, 19:9, 19.5:9, 20:9, and 21:9. Or, if you’re a fan of foldable smartphones, you might be surprised to find out that they generally have squarish screens with aspect ratios as weird as 6:5. While using such aspect ratios on smartphones allows them to offer larger screens and more immersive viewing experiences, they can also make watching movies, videos, and other types of content problematic. That’s because video content often doesn’t fit well on such screens, requiring zooming or cropping.

The SAMSUNG Galaxy Z Fold 5 has an aspect ratio of 10.81:9

Is there a relation between aspect ratio and display orientation?

The display orientation refers to how you look at a screen: landscape and portrait are the most common screen orientations. Landscape orientation means the screen’s width is larger than its height, while portrait orientation means the opposite. Most large screens, such as those on our computers, laptops, or TVs, use landscape orientation. Smaller screens, such as the ones on our smartphones, are normally used in portrait mode, but because their size allows you to rotate them easily, they can also be used in landscape mode. The screen’s aspect ratio defines the ratio of its longer side to its shorter side. Consequently, that means that the screen’s aspect ratio tells you the ratio of the width to height when you look at it in landscape mode. The aspect ratio is not used to describe screens (or any rectangular shapes) in portrait mode.

Different screens with different display orientations

In other words, you could say that an aspect ratio of 16:9 is the same as 9:16, but the latter is not the conventional way of stating a display’s aspect ratio. However, you can refer to the screen resolution in both ways. For example, a resolution of 1920 x 1080 pixels is the same as 1080 x 1920 pixels; it is just that the orientation differs.

How does the size of the screen affect resolution?

Although a 4:3 TV display can be adjusted to show black bars at the top and bottom of the screen while a widescreen movie or show is being displayed, this does not make sense with a monitor, so Windows does not even offer you widescreen resolutions as a choice on such a display. You can still watch movies with black bars like on a TV screen, but it is your media player or web browser that adds them automatically.
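The size of those black bars follows directly from the two aspect ratios involved. Here is a minimal Python sketch of the calculation; the function name is hypothetical, and it simply assumes the picture is scaled as large as possible without cropping and then centered, which may differ from what a particular media player does.

```python
def black_bars(content_ratio: tuple, screen: tuple) -> tuple:
    """Return (left/right bar width, top/bottom bar height) in pixels when
    content of a given aspect ratio is centered on a screen without cropping."""
    ratio_w, ratio_h = content_ratio
    screen_w, screen_h = screen
    # Largest scale at which the content still fits inside the screen.
    scale = min(screen_w / ratio_w, screen_h / ratio_h)
    shown_w = round(ratio_w * scale)
    shown_h = round(ratio_h * scale)
    return (screen_w - shown_w) // 2, (screen_h - shown_h) // 2

# A 16:9 movie on a 4:3 screen of 1024 x 768: bars at the top and bottom.
print(black_bars((16, 9), (1024, 768)))   # (0, 96)

# A 4:3 movie on a 16:9 screen of 1920 x 1080: bars on the left and right.
print(black_bars((4, 3), (1920, 1080)))   # (240, 0)
```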

A 4:3 aspect ratio movie on a 16:9 display

The most important thing is not the monitor’s size but its ability to display higher-resolution images. The higher you set the resolution, the smaller the images on the screen are, and there comes a point when the text on the screen becomes too small to read. On a larger monitor, it is possible to push the resolution very high indeed, but if that monitor’s pixel density is not up to par, text becomes unreadable before you reach the maximum possible resolution. In many cases, the screen does not display anything at all if you tell Windows to use a resolution that the monitor cannot handle. In other words, do not expect miracles out of a cheap monitor. When it comes to high-definition displays, you definitely get what you pay for.

Do you have any other questions about screen resolutions?

If you are not technically inclined, you are likely confused by the many specs of displays and resolutions. Hopefully, this article helped you understand the most essential characteristics of a display: aspect ratio, resolution, and type. If you have any questions, please ask in the comments section below.

28.08.2023
